Markov Chains

- By Admin

- November 5, 2014


Theory

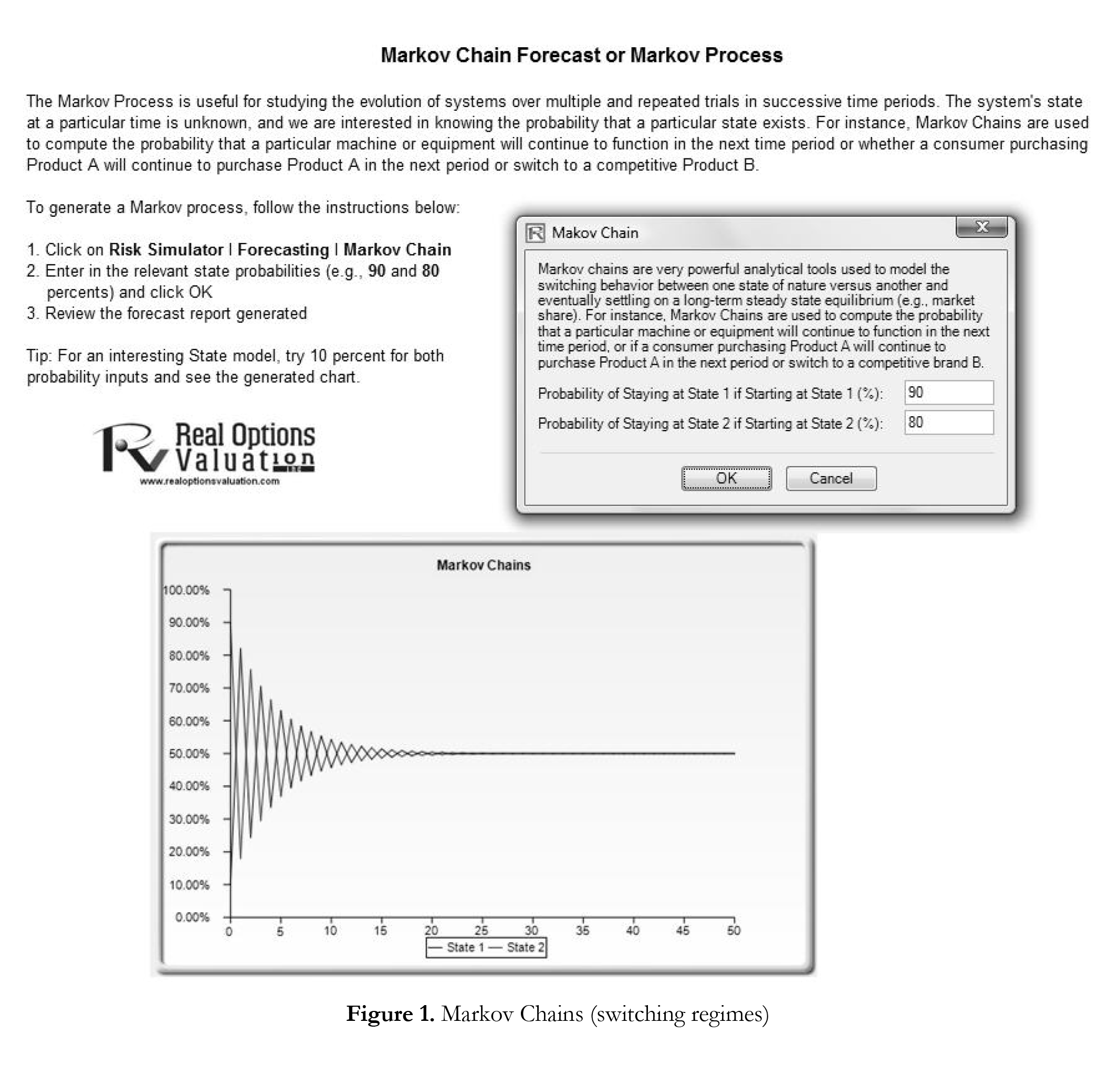

A Markov chain exists when the probability of a future state depends only on the state immediately before it; linked together over many periods, these states form a chain that converges to a long-run steady-state level. The Markov approach is typically used to forecast the market share of two competitors. The required inputs are the probability that a customer in the first store (the first state) returns to the same store in the next period and the probability that the customer instead switches to a competitor's store in the next period.
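To make the two-store case concrete, here is a minimal Python sketch of the chain described above. The function names, the parameters `p_stay_a` and `p_stay_b` (the probability that a customer of each store returns to it), and the starting share are illustrative assumptions, not part of any particular software package.

```python
def simulate_market_share(p_stay_a, p_stay_b, share_a=0.5, periods=20):
    """Return store A's market share for period 0 through `periods`.

    p_stay_a and p_stay_b are hypothetical inputs: the probability that a
    customer of store A (or B) returns to the same store next period.
    """
    shares = [share_a]
    for _ in range(periods):
        share_b = 1.0 - share_a
        # Next-period share of A: customers who stay with A,
        # plus customers who switch from B to A.
        share_a = share_a * p_stay_a + share_b * (1.0 - p_stay_b)
        shares.append(share_a)
    return shares


def steady_state_share(p_stay_a, p_stay_b):
    """Long-run share of store A for a two-state chain.

    Solves pi_a = pi_a * p_stay_a + (1 - pi_a) * (1 - p_stay_b).
    Undefined when both stay probabilities equal 1 (nobody ever switches).
    """
    leave_a = 1.0 - p_stay_a
    leave_b = 1.0 - p_stay_b
    return leave_b / (leave_a + leave_b)


if __name__ == "__main__":
    path = simulate_market_share(p_stay_a=0.9, p_stay_b=0.8, periods=50)
    print("share of A after 50 periods:", round(path[-1], 4))
    print("steady-state share of A:", round(steady_state_share(0.9, 0.8), 4))
```

With the assumed values of 0.9 and 0.8, the simulated share approaches the steady-state value regardless of the starting share. If both values are instead set low (for example 0.1, read as stay probabilities) and the starting share is away from 0.5, most customers switch every period and the share oscillates from one period to the next before settling.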

Procedure

Note

Set both probabilities to 10% and rerun the Markov chain, and you will see the effects of switching behaviors very clearly in the resulting chart, as shown at the bottom of Figure 1.
