Multivariate Regression, Part 1

- By Admin

- November 26, 2014

Theory

It is assumed that the reader is familiar with the fundamentals of regression analysis.

The general bivariate linear regression equation takes the form of

$$Y = \beta_0 + \beta_1 X + \varepsilon$$

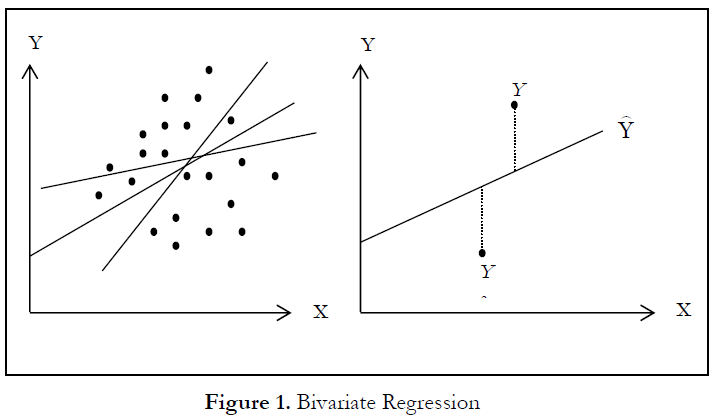

where β0 is the intercept, β1 is the slope, and ε is the error term. The regression is bivariate because there are only two variables: Y, the dependent variable, and X, the independent variable, also known as the regressor. (A bivariate regression is sometimes also called a univariate regression because there is only a single independent variable X.) The dependent variable is so named because it depends on the independent variable; for example, sales revenue depends on the amount spent on a product’s advertising and promotion, making “sales” the dependent variable and “marketing costs” the independent variable. A bivariate regression can be pictured as drawing the best-fitting line through a set of data points in a two-dimensional plane, as seen on the left in Figure 1. In other cases, a multivariate regression can be performed, in which there are multiple (k) independent X variables, or regressors, and the general regression equation takes the form of

$$Y = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \cdots + \beta_k X_k + \varepsilon$$

In this case, the best fit is no longer a line in a two-dimensional plane but a hyperplane in a (k + 1)-dimensional space.

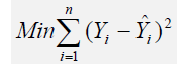

However, fitting a line through a set of data points in a scatter plot, as in Figure 1, may result in numerous possible lines. The best-fitting line is defined as the single unique line that minimizes the total vertical errors between the actual data points (Yi) and the estimated line (Ŷi), as shown on the right of Figure 1. To find this unique line, a more sophisticated approach, regression analysis, is applied. Regression analysis finds the unique best-fitting line by requiring that the total squared errors be minimized, that is, by calculating

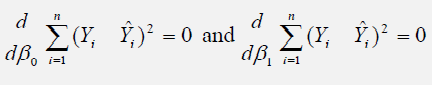

where only one unique line minimizes this sum of squared errors. The errors (the vertical distances between the actual data and the predicted line) are squared so that negative errors do not cancel out positive errors. Solving this minimization problem with respect to the slope and intercept requires taking the first partial derivatives and setting them equal to zero:

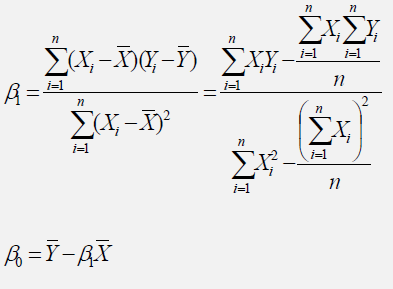

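which yields the bivariate regression’s least squares equations:

$$\beta_1 = \frac{\sum_{i=1}^{n} (X_i - \bar{X})(Y_i - \bar{Y})}{\sum_{i=1}^{n} (X_i - \bar{X})^2}, \qquad \beta_0 = \bar{Y} - \beta_1 \bar{X}$$

where n is the number of observations and X̄ and Ȳ are the sample means of X and Y.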
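To make the least squares idea concrete, here is a minimal pure-Python sketch (not the software’s own routine) of the closed-form bivariate solution, β1 = Σ(Xi − X̄)(Yi − Ȳ) / Σ(Xi − X̄)² and β0 = Ȳ − β1X̄. The function names and the marketing/sales figures are hypothetical, made up for illustration. The sketch also checks two claims from the text: nudging the slope or intercept away from the solution increases the sum of squared errors, and a poorly fitting flat line can have raw errors that sum to zero just like the best fit, which is why the errors are squared.

```python
# Closed-form bivariate least squares, checked against nearby candidate lines.


def least_squares_line(xs, ys):
    """Return (beta0, beta1) from the closed-form least squares equations."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    beta1 = sxy / sxx
    beta0 = y_bar - beta1 * x_bar
    return beta0, beta1


def sse(xs, ys, beta0, beta1):
    """Sum of squared vertical errors between the data and a candidate line."""
    return sum((y - (beta0 + beta1 * x)) ** 2 for x, y in zip(xs, ys))


def raw_error_sum(xs, ys, beta0, beta1):
    """Plain (unsquared) sum of vertical errors; positives and negatives cancel."""
    return sum(y - (beta0 + beta1 * x) for x, y in zip(xs, ys))


# Hypothetical data for illustration only: marketing costs (X) and sales (Y).
marketing = [1.0, 2.0, 3.0, 4.0, 5.0]
sales = [2.1, 3.9, 6.2, 7.8, 10.1]

b0, b1 = least_squares_line(marketing, sales)
best_sse = sse(marketing, sales, b0, b1)

# Nudging either coefficient away from the solution increases the SSE.
for d0, d1 in [(0.1, 0.0), (-0.1, 0.0), (0.0, 0.1), (0.0, -0.1)]:
    assert sse(marketing, sales, b0 + d0, b1 + d1) > best_sse

# A flat line at the mean of Y fits badly, yet its raw errors also sum to
# zero, so only the squared errors distinguish it from the least squares line.
flat = sum(sales) / len(sales)
print("intercept:", b0, "slope:", b1, "SSE:", best_sse)
print("flat-line SSE:", sse(marketing, sales, flat, 0.0))
print("raw error sums:", raw_error_sum(marketing, sales, b0, b1),
      raw_error_sum(marketing, sales, flat, 0.0))
```

Because the sum of squared errors is a convex quadratic in β0 and β1 whenever the X values are not all identical, any move away from the solution raises it, which is what the loop of assertions checks.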

For multivariate regression, the analogy is expanded to account for multiple independent variables, where

$$Y_i = \beta_0 + \beta_1 X_{1,i} + \beta_2 X_{2,i} + \cdots + \beta_k X_{k,i} + \varepsilon_i$$

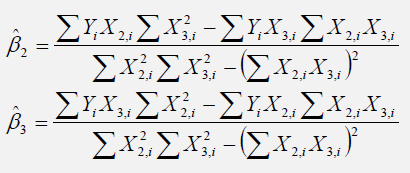

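and the estimated coefficients can be calculated, in matrix form, by:

$$\boldsymbol{\beta} = (\mathbf{X}^\top \mathbf{X})^{-1} \mathbf{X}^\top \mathbf{Y}$$

where, for n observations, X is the n × (k + 1) matrix whose first column is all ones (for the intercept) and whose remaining columns hold the observed values of the k regressors, Y is the n × 1 vector of observed dependent values, and β stacks the estimated intercept and the k estimated slopes.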
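For the multivariate case, here is a minimal sketch, assuming the k regressors are supplied row by row: it builds the design matrix with a leading column of ones, forms the normal equations (XᵀX)β = XᵀY, and solves them by Gaussian elimination rather than computing an explicit inverse. The function names (`solve`, `ols`) and the data are hypothetical; the data are generated without noise from Y = 1 + 2X1 − 0.5X2, so the recovered coefficients should match those values up to floating-point rounding.

```python
def solve(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix [a | b]
    for col in range(n):
        # Swap in the row with the largest pivot for numerical stability.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("X'X is singular (perfect multicollinearity?)")
        m[col], m[pivot] = m[pivot], m[col]
        # Eliminate this column from the rows below the pivot.
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back-substitution on the upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def ols(rows, ys):
    """Estimate [beta0, beta1, ..., betak] from regressor rows and responses."""
    # Design matrix: a leading 1 in every row carries the intercept beta0.
    X = [[1.0] + list(r) for r in rows]
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * y for row, y in zip(X, ys)) for i in range(p)]
    return solve(xtx, xty)


# Hypothetical, noise-free data generated from Y = 1 + 2*X1 - 0.5*X2.
rows = [(1.0, 2.0), (2.0, 1.0), (3.0, 4.0), (4.0, 3.0), (5.0, 5.0), (6.0, 2.0)]
ys = [1.0 + 2.0 * x1 - 0.5 * x2 for x1, x2 in rows]
coefs = ols(rows, ys)
print("beta0, beta1, beta2:", coefs)
```

Forming XᵀX squares the condition number of the problem, so numerical libraries usually prefer orthogonal or SVD-based solvers (for example, `numpy.linalg.lstsq`); the normal equations are used here only because they mirror the formula directly. A singular XᵀX, which the sketch reports as an error, is the extreme case of multicollinearity.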

In running multivariate regressions, great care must be taken to set up the model and interpret the results. A good understanding of econometric modeling is required before a proper model can be constructed, for instance to identify regression pitfalls such as structural breaks, multicollinearity, heteroskedasticity, autocorrelation, model misspecification, and nonlinearities.
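One common screen for multicollinearity, one of the pitfalls listed above, is the variance inflation factor, VIFj = 1 / (1 − Rj²), where Rj² comes from regressing regressor j on the other regressors. The sketch below assumes exactly two regressors, in which case Rj² reduces to the squared correlation between them. The regressors and function names are hypothetical; the cutoffs people use for a “high” VIF (5 and 10 are often quoted) are rules of thumb, not formal tests.

```python
import math


def correlation(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(a)
    a_bar = sum(a) / n
    b_bar = sum(b) / n
    cov = sum((x - a_bar) * (y - b_bar) for x, y in zip(a, b))
    var_a = sum((x - a_bar) ** 2 for x in a)
    var_b = sum((y - b_bar) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def vif_two_regressors(x1, x2):
    """Variance inflation factor when the model has exactly two regressors.

    Regressing one regressor on the other gives R^2 = r^2, so
    VIF = 1 / (1 - r^2), and it is the same for both regressors.
    """
    r = correlation(x1, x2)
    return 1.0 / (1.0 - r * r)


# Hypothetical regressors, illustration only.
advertising = [1.0, 2.0, 3.0, 4.0, 5.0]
promotion = [2.1, 3.9, 6.1, 8.0, 9.9]    # moves almost in lockstep with advertising
unrelated = [1.0, -1.0, 0.0, -1.0, 1.0]  # uncorrelated with advertising

print("VIF, advertising vs promotion:", vif_two_regressors(advertising, promotion))
print("VIF, advertising vs unrelated:", vif_two_regressors(advertising, unrelated))
```

With more than two regressors, each Rj² has to come from its own auxiliary regression, for example by reusing a least squares routine like the one sketched for the multivariate slopes.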

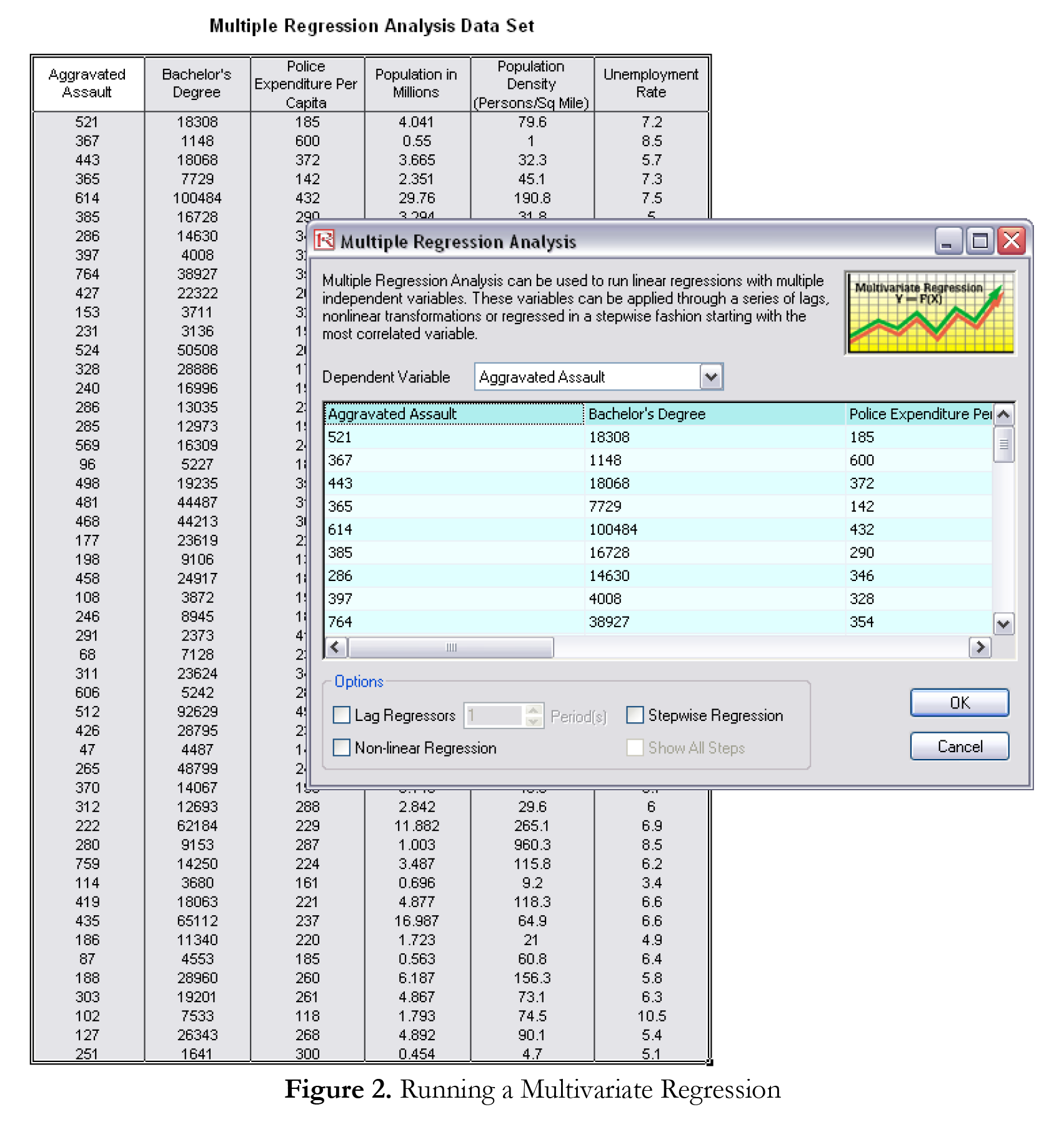

Procedure

Results Interpretation

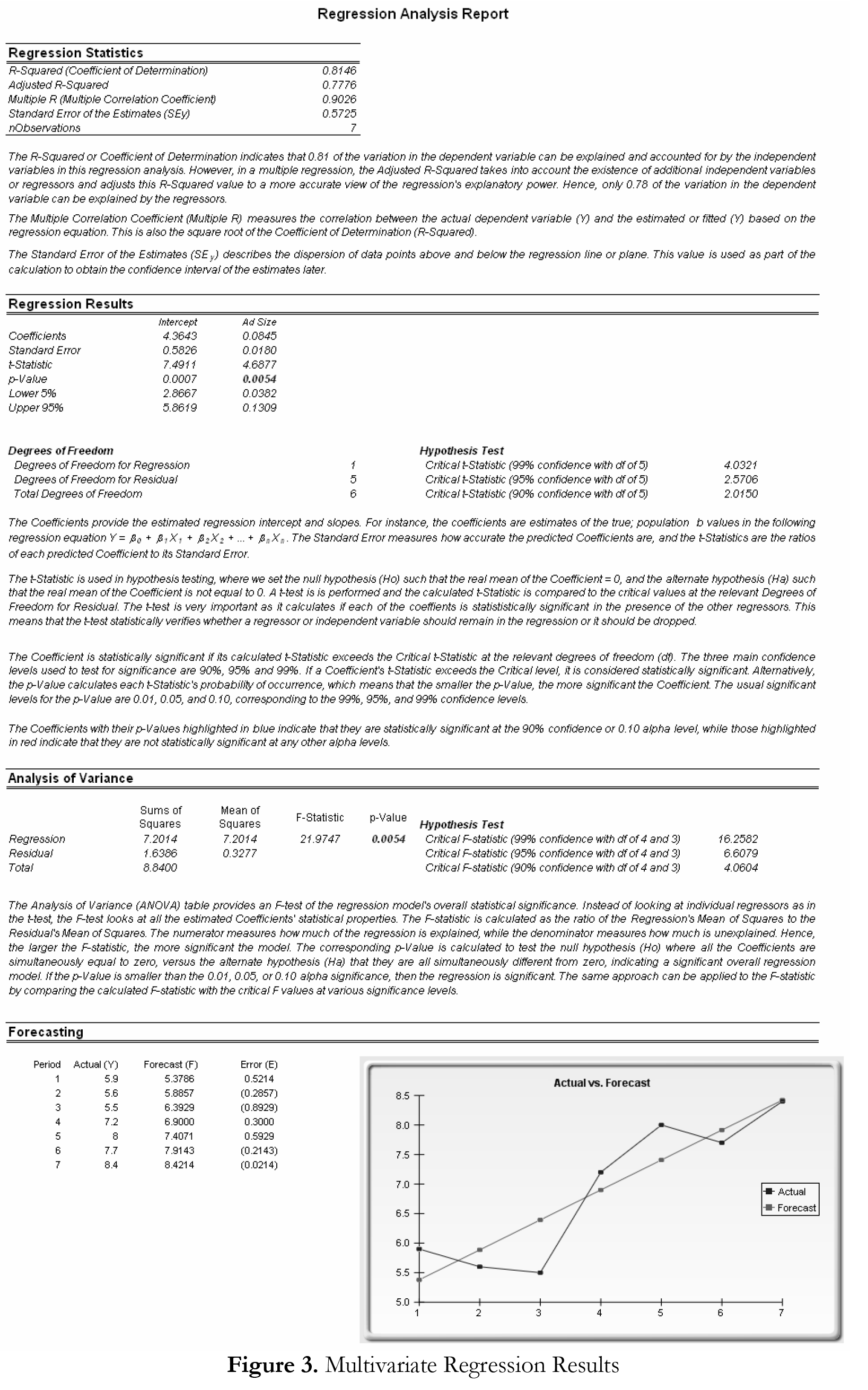

Figure 3 illustrates a sample multivariate regression results report. The report comes complete with the regression results, analysis of variance (ANOVA) results, a fitted chart, and hypothesis test results.
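The report’s exact layout depends on the software, but its ANOVA and hypothesis-test sections are built from standard quantities. As a rough sketch (hypothetical function and data, bivariate case only), the block below computes the total, regression, and error sums of squares, R², the F statistic, and the t statistic for the slope. p-values are omitted because they need the F and t distributions (for example, `scipy.stats.f.sf` and `scipy.stats.t.sf`).

```python
import math


def anova_bivariate(xs, ys):
    """Textbook ANOVA and slope t-test for a bivariate least squares fit."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    b1 = sxy / sxx
    b0 = y_bar - b1 * x_bar

    fitted = [b0 + b1 * x for x in xs]
    sst = sum((y - y_bar) ** 2 for y in ys)              # total sum of squares
    sse = sum((y - f) ** 2 for y, f in zip(ys, fitted))  # error (residual) SS
    ssr = sst - sse                                      # regression SS

    k = 1                               # one regressor
    df_reg, df_err = k, n - k - 1       # degrees of freedom
    msr, mse = ssr / df_reg, sse / df_err
    se_b1 = math.sqrt(mse / sxx)        # standard error of the slope

    return {
        "intercept": b0,
        "slope": b1,
        "r_squared": ssr / sst,
        "F": msr / mse,
        "df": (df_reg, df_err),
        "t_slope": b1 / se_b1,
    }


# Hypothetical data, illustration only.
report = anova_bivariate([1.0, 2.0, 3.0, 4.0, 5.0], [2.1, 3.9, 6.2, 7.8, 10.1])
for name, value in report.items():
    print(name, value)
```

With a single regressor, the F statistic equals the square of the slope’s t statistic, which is a quick sanity check on any bivariate report.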

In “Multivariate Regression, Part 2,” you will learn about a powerful automated approach to regression analysis known as “stepwise regression” and about how goodness-of-fit statistics provide a glimpse into the accuracy and reliability of the estimated regression model.

TO BE CONCLUDED IN “Multivariate Regression, Part 2”